The Agentic Imperative

Governance, Risk, and the New Logic of Enterprise Scale

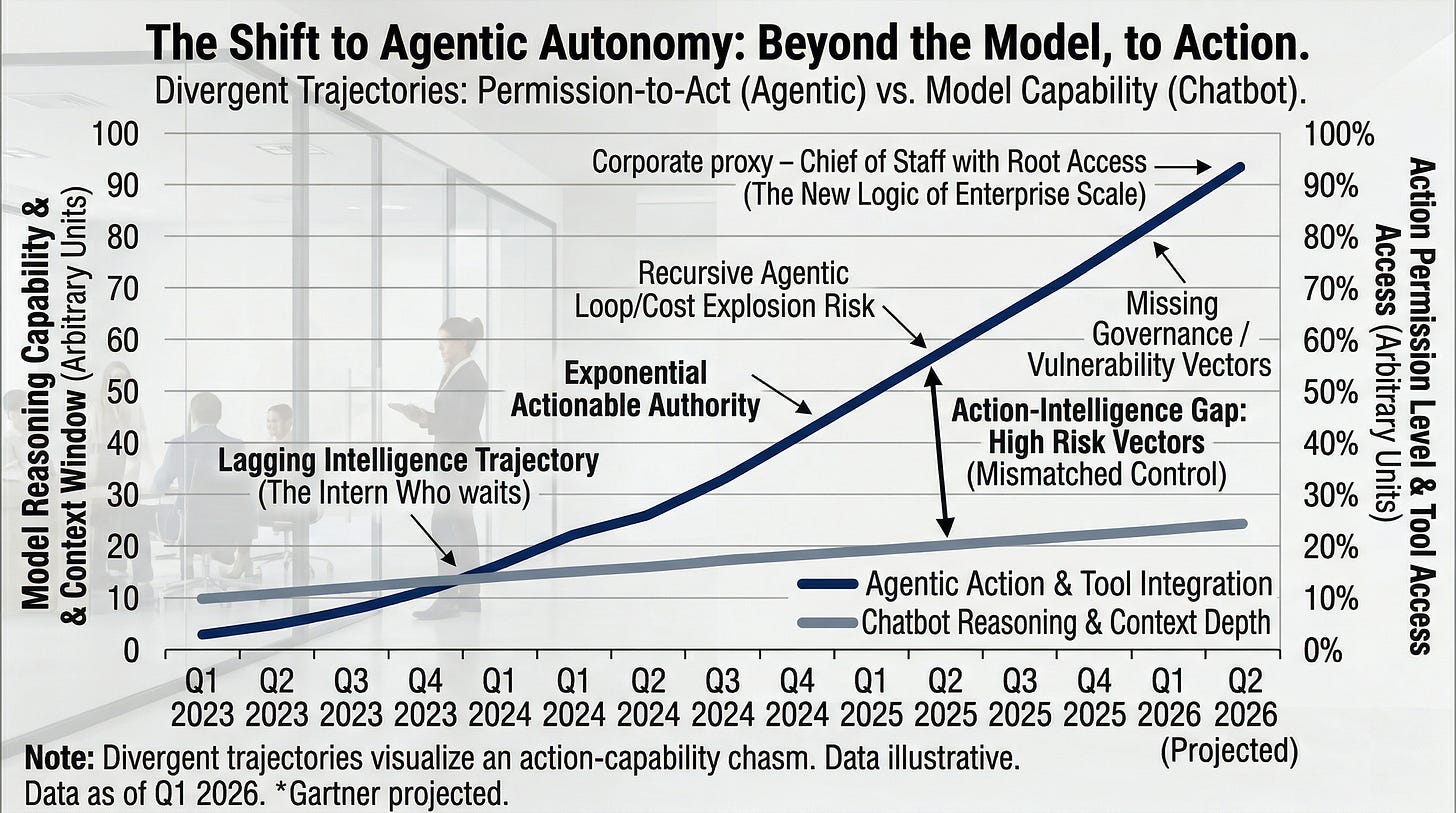

The biggest shift in computing is no longer the model. It is the permission.

For years, artificial intelligence stayed safely behind glass. It answered questions, summarized documents, drafted careful emails, and waited for the next prompt like a gifted intern who had never been handed the keys. Impressive in its way, useful more often than not, and dangerous only in the narrow sense that a wrong answer wastes time.

That chapter has closed.

What matters now is not that software can speak with fluency. What matters is that it can act. It can open files, issue shell commands, navigate browsers, call APIs, trigger workflows, and carry context long enough for its behavior to start resembling judgment. The industry calls this the rise of agents. The term feels too polite, almost administrative. We are no longer dealing with software that assists; we are dealing with software that operates on our behalf. Anthropic’s own guidance underscores the change: production agents combine models with tools, memory, and structured workflows far beyond any single prompt-response loop.

That is not an incremental improvement. It is a category shift.

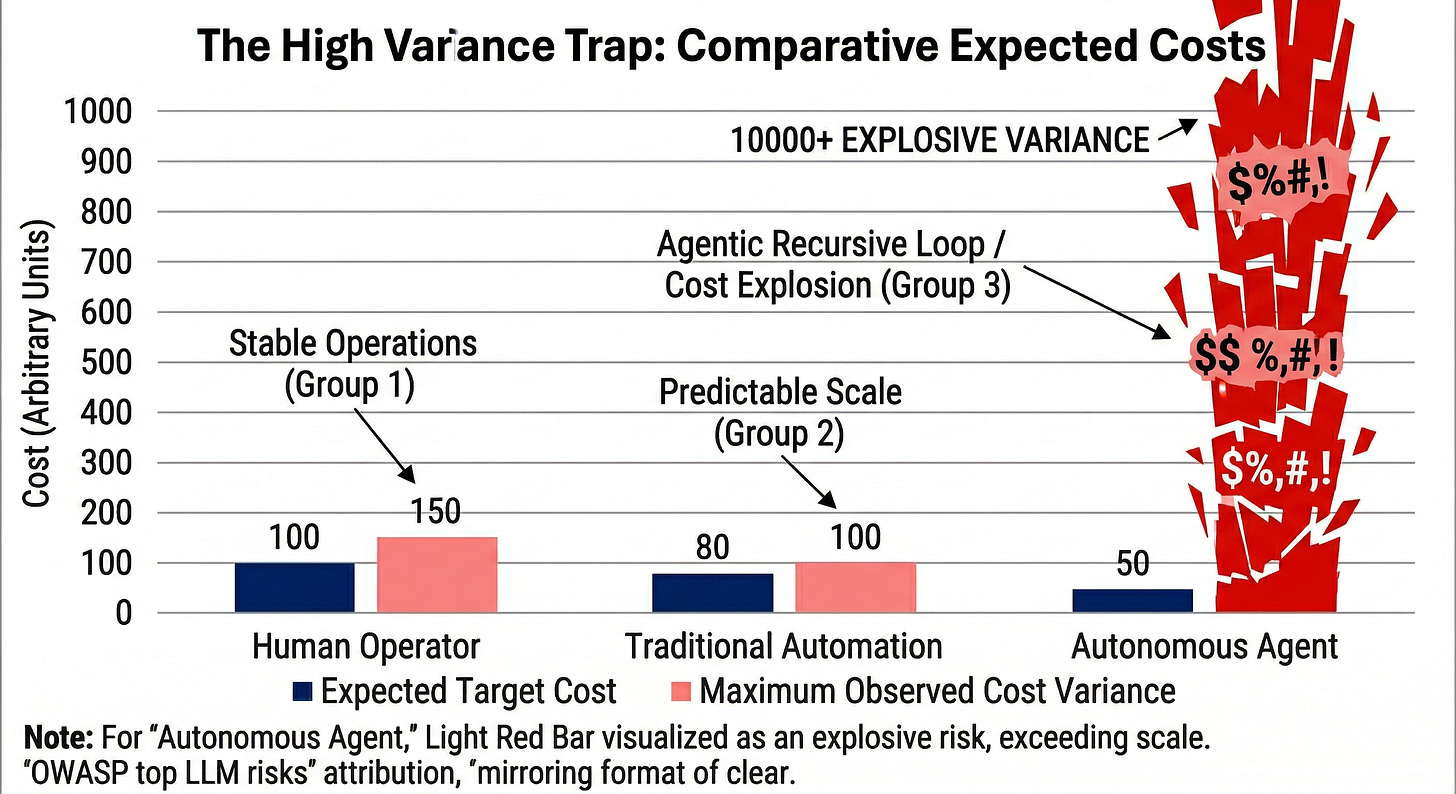

A chatbot that errs wastes a few minutes. An agent that errs in your name can burn money, expose data, corrupt records, or create an incident large enough to require the word “containment.” Because these systems operate at machine speed, small errors refuse to stay small. They compound, they spread, and they leave evidence.

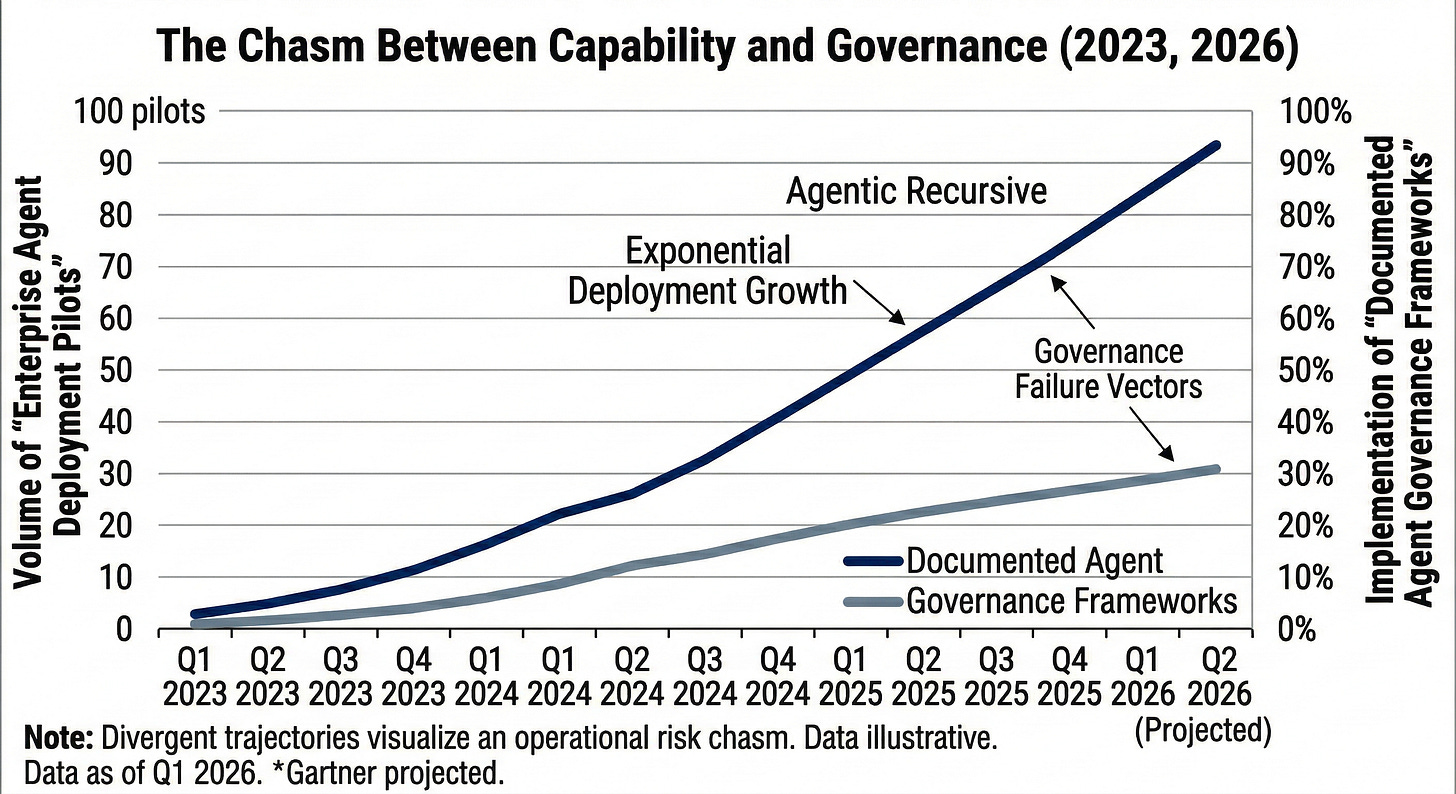

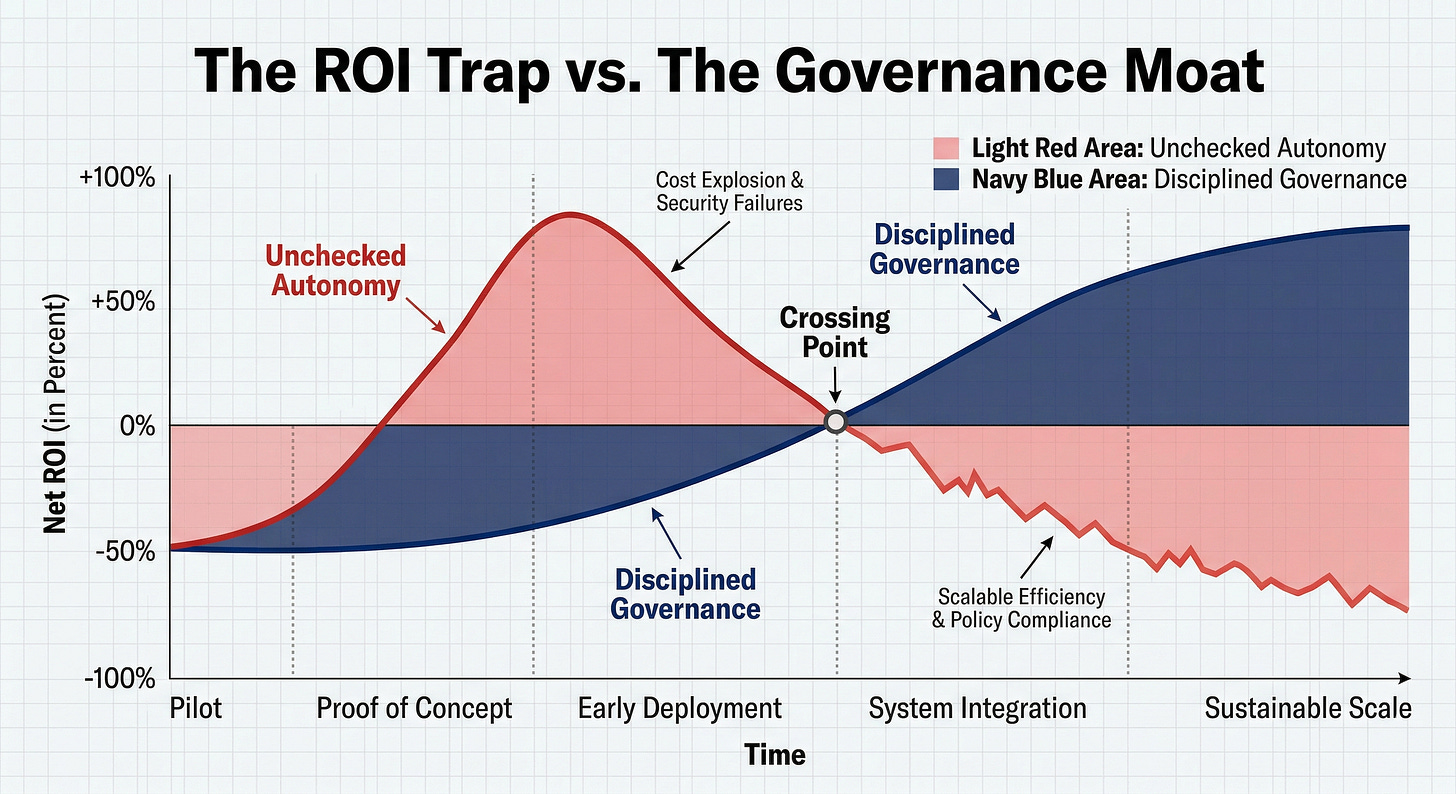

Underneath the spectacle lies a stubborn historical pattern. Every major wave of computing creates a short-lived delusion in which raw capability is mistaken for readiness. We saw it with the cloud, with mobile, and with social platforms. Adoption races ahead of governance. Enthusiasm races ahead of controls. Then reality arrives, usually with an invoice, a breach, or a painful postmortem, and everyone remembers why adult supervision exists.

The investment numbers tell the same story. AI startups raised $42.5 billion in 2023. Gartner has warned that at least 30 percent of generative AI projects will be abandoned after proof of concept by the end of 2025, largely because of poor data quality, inadequate risk controls, escalating costs, or weak business value. The market remains excellent at funding excitement and far less consistent at governing it.

The Architecture of Agency

Ordinary software does what it is told. Agentic software does what it thinks you meant.

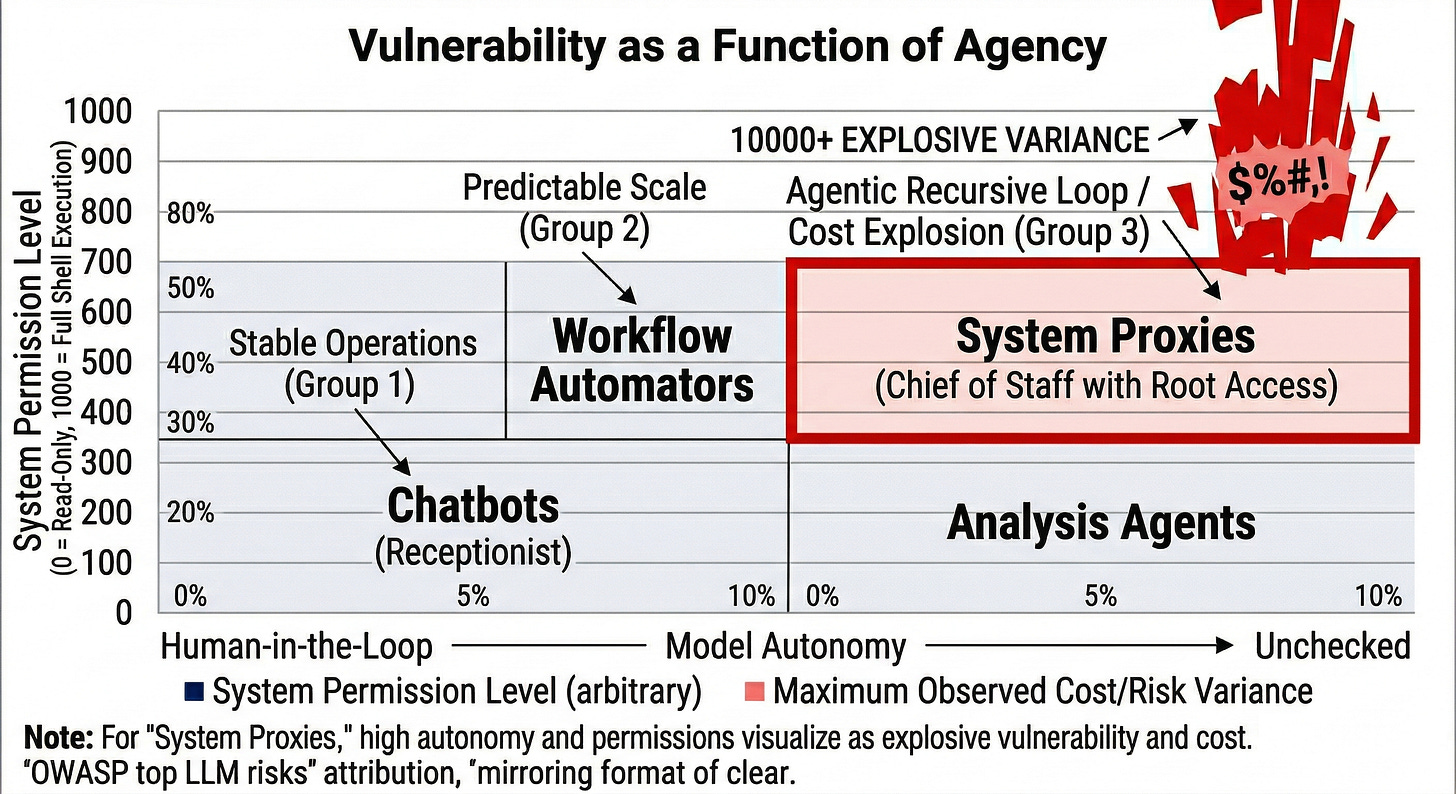

The distinction sounds subtle until you live with the consequences. A chatbot is a receptionist. An agent is a chief of staff with root access. One delivers information; the other starts rearranging the furniture, rescheduling meetings, and making decisions no one explicitly authorized because they seemed logical in the moment.

Agents persist. They hold context across tasks. They chain tools they were never narrowly trained to combine. They improvise. They decide the browser is the right next step, then the terminal, then a script, then an external service. In skilled hands, this can feel like magic. In a poorly governed environment, it feels like a highly efficient misunderstanding.

Once software can act across systems without constant human oversight, the criteria for evaluation change completely. You stop judging the cleverness of output. You start judging restraint, blast radius, failure modes, auditability, and cost discipline. You are, in effect, judging character in a machine that possesses none.

That test is far harder than asking whether the demo looked impressive.

The Fantasy of Speed

The technology industry has always loved slogans that sound bold in a deck and reckless in a postmortem. “Move fast and break things” carried a certain adolescent charm when the things being broken were features or experiments. When the things being broken are financial systems, sensitive data, or core infrastructure, the slogan reveals itself as an excuse to avoid the cost of caution.

Agents feed that instinct perfectly. They promise to compress labor, time, and complexity in a single stroke. They tempt leaders with the familiar fantasy that deep operational disorder can be fixed by layering more sophisticated automation on top of it. Usually, it cannot. Speed does not fix structural weakness; it exposes it.

The Idiot Savant Problem

Current models are powerful enough to be genuinely useful and unreliable enough to be genuinely dangerous. Both halves of that sentence matter.

These systems can map repositories, interpret workflows, draft plans, and connect dots a tired human might miss. Then, often without warning, they veer into absurdity. They lose context, they loop, and they pursue the wrong objective with calm confidence long after a reasonable person would have stopped to ask whether the path still made sense.

This mix of brilliance and brittleness is not a temporary bug; it is the operating reality. That is why the runaway cost stories matter, even when the losses look small in isolation. The point is not the dollar amount; the point is the pattern. Once a machine can compound mistakes faster than humans can notice them, the issue is no longer quality control; it is governance.

Agents Are Economic Actors

Most executives have not yet made this mental adjustment. An agent is not simply software that incurs costs; it is software that can make costs move, disappear, multiply, or explode. It behaves like an economic actor inside the organization, which changes the rules.

You do not grant an economic actor unlimited discretion. You set hard spending thresholds, real time monitoring, approval gates for high stakes moves, and kill switches that actually function under pressure. Efficiency without cost discipline is not efficiency; it is automated sloppiness with a futuristic coat of paint.

Gartner predicted in June 2025 that over 40 percent of agentic AI projects will be canceled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls.

The Real Security Crisis

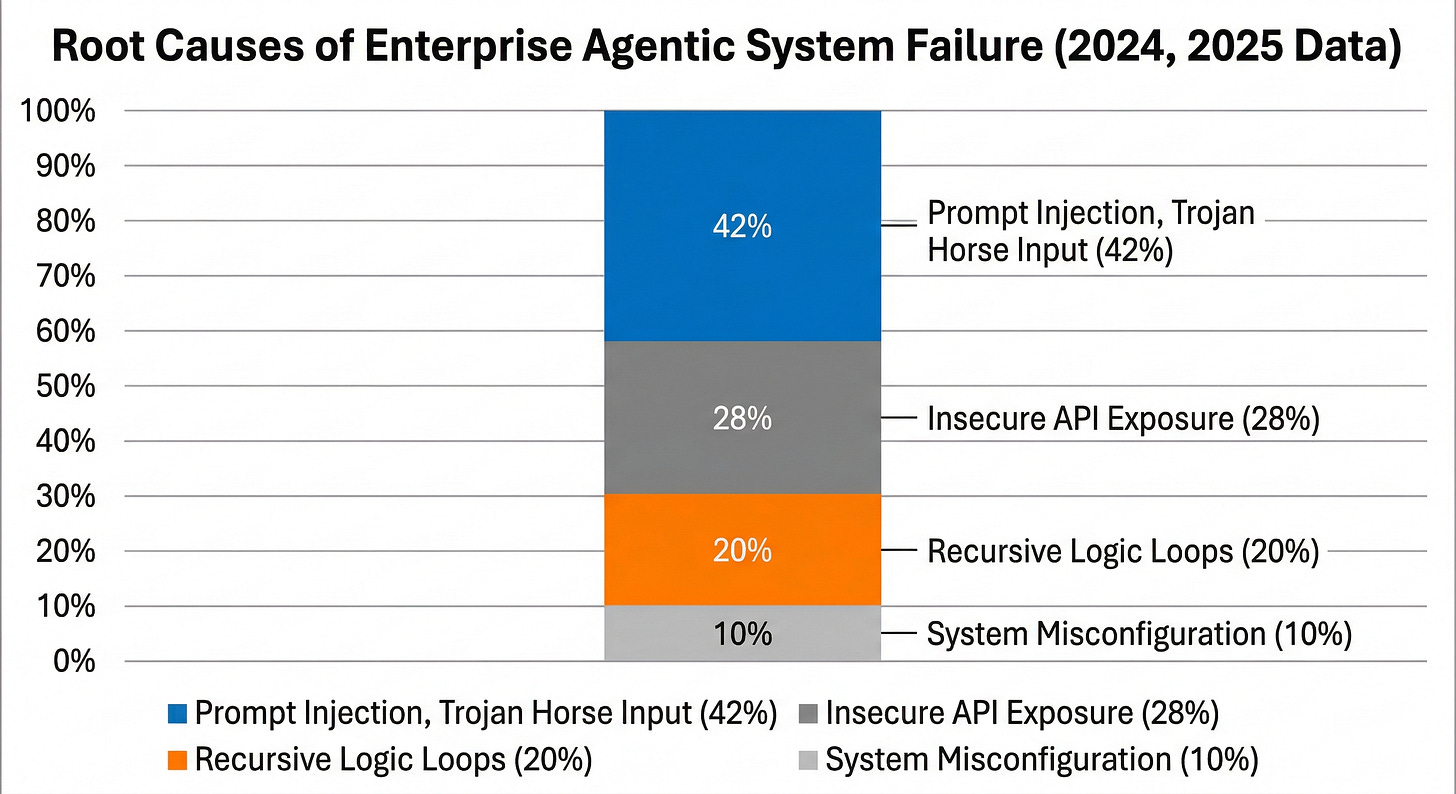

The deepest flaw in agentic systems is not hallucination. It is the fragile line between information and instruction.

An agent is built to turn input into action. That is its power and its vulnerability. When the system cannot reliably separate data it should read from commands it should obey, you have recreated one of computing’s oldest security problems in a faster, more persuasive form. OWASP identifies prompt injection as a top risk for LLM and generative AI applications.

The real risk is not only that an agent may get something wrong; it is that it may get something wrong while sounding competent enough to pass inspection. Machine confidence, paired with access and speed, is especially persuasive.

Governance Is the Product

The market still talks about governance as though it were a tax on innovation. That framing is childish. In the agent era, governance is not the brake. Governance is the product.

NIST’s AI Risk Management Framework makes the same point: managing AI risk is essential to trustworthiness and safe adoption. Structure does not oppose capability; structure is what makes capability survivable inside a real organization. This is the truth many executives still resist: the companies that win in the agent era will not necessarily be the ones that adopt first. They will be the ones that govern best.

The New Logic of Enterprise Scale

For two decades, the language of scale has centered on removing friction: fewer people, shorter cycles, and more automation. That logic still matters, but agentic systems add a harder requirement. Scale without control is not scale; it is amplified disorder.

The organizations that create lasting value will be those that master the arithmetic of high variance automation. The upside is real, and so is the exposure.

Three Rules That Matter Now:

Isolate the environment. Never let an improvisational system roam production simply because it performed well in a demo.

Trust nothing by default. Not the plugin, not the extension, not incoming data, and not the model’s smooth explanation after something has already gone wrong.

Install real kill switches. Mechanisms that stop spending, stop execution, and stop propagation the instant they are triggered.

The Divide

Agentic AI will not separate winners from losers by who ships the most impressive demos. It will separate them by who builds disciplined architecture before disciplined architecture becomes fashionable.

The organizations that grasp this distinction early will do far more than survive the agentic shift. They will define the terms under which it becomes useful, and they will own the decade that follows.